Where? IMAG Building, Grenoble Campus, France (Google Map link)

When? February 19th-23rd, 2024

What? Learn from outstanding speakers & discussions in HRI

For whom? Master & PhD students, young researchers & engineers

Cost? Free, but we ask that you attend if you register.

For any question: soraim [at] inria [dot] fr

Thank you to all of 2024’s speakers and participants!

We hope to bring you a second edition in the near future.

Full programme with abstracts here, All sessions replay available here

SoRAIM Winter School Partners

What is SoRAIM?

The SoRAIM multi-disciplinary winter school combines topics in social robotics, artificial intelligence, and multimedia. Several top-level invited speakers will introduce and discuss all relevant areas for building socially aware robots that communicate and interact with humans in a shared space. Lectures will cover the following topics:

- Speech source localization and separation

- Mapping and visual self-localization

- Socially aware robot navigation

- Tracking and analysis of human behavior

- Dialog management, natural language understanding, and generation

- Robotic middleware and software integration

- Ethics and experimental design

SoRAIM aims to foster discussion between experts in these fields and to expose young researchers and engineers to highly qualified scientists and experts. SoRAIM is organized by the European H2020 SPRING project, which investigates social robotics for multiparty interactions in gerontological healthcare. It will provide an opportunity to interact and hold discussions with several members of the project, to present your own research in the form of a poster, and to participate in wider topic discussions with your peers.

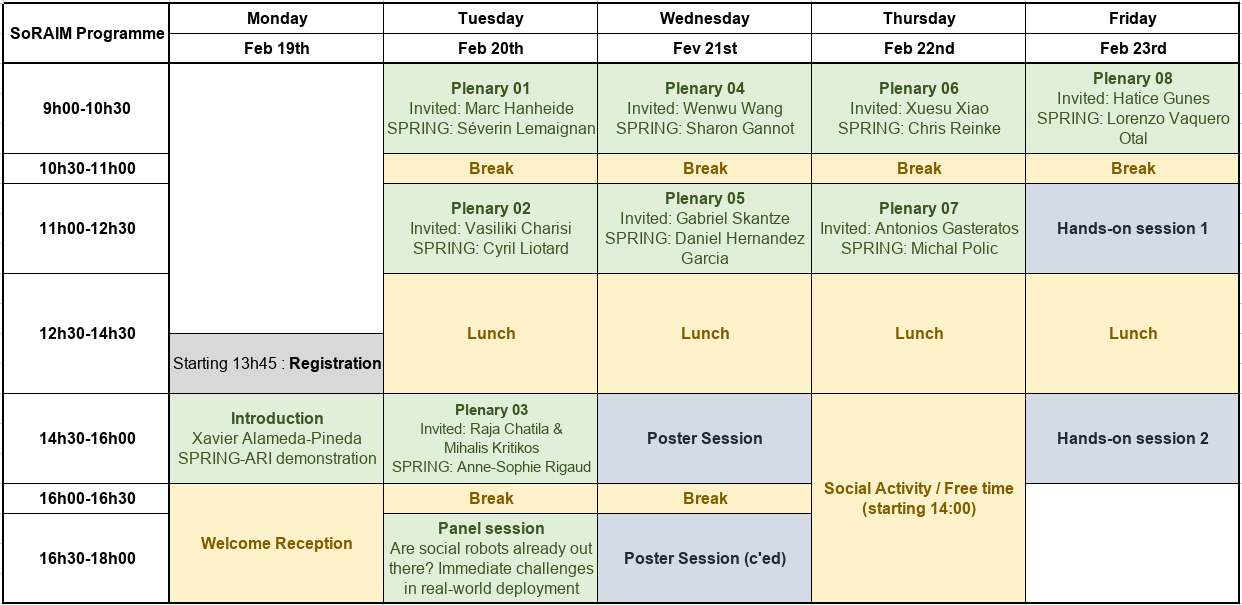

Programme

Sessions, Keynote Talks, and Materials

We are excited to introduce the following keynote speakers together with the title and a short abstract of the courses they will teach at SoRAIM.

Introduction & Demo

Plenary 01: Autonomous Robots: Adaptation and Software Integration

SPRING’s software architecture [Slides, Recording]

Dr. Séverin Lemaignan, PAL Robotics

Autonomous Robots in the Wild – Adapting from and for Interaction [Slides, Recording]

Prof. Marc Hanheide, University of Lincoln (@MarcHanheide)

Abstract: Robots that are released “into the wild” are moving away from the controlled environments and laboratories in which they were developed. They are now forced to contend with constantly changing environments and uncertainty, learn on the job, and adapt continuously and over the long term. The paradigm of long-term autonomy and adaption is what enables the deployment of robots away from factory floors and warehouses where they already are omnipresent into equally challenging and promising application domains. In the winter school course, we will look at how robots can “survive” (operate reliably and effectively) in dynamic environments, ranging from agricultural fields to museums, experiencing slow changes due to seasons and acute uncertainty from interaction with humans. We will look at selected recent robotic developments in mapping, navigation, interaction, and perception and discuss the challenges and opportunities of deploying autonomous robots in the wild.

Bio: Marc Hanheide is a Professor of Intelligent Robotics & Interactive Systems in the School of Computer Science at the University of Lincoln, UK, and the director of the University’s cross-disciplinary research centre in Robotics, the Lincoln Centre for Autonomous Systems (L-CAS). He received the Diploma in computer science from Bielefeld University, Germany, in 2001 and the Ph.D. degree (Dr.-Ing.), also in computer science and also from Bielefeld University, in 2006. In 2001, he joined the Applied Informatics Group at the Technical Faculty of Bielefeld University. From 2006 to 2009 he held a position as a senior researcher in the Applied Computer Science Group. From 2009 until 2011, he was a research fellow at the School of Computer Science at the University of Birmingham, UK. Marc Hanheide is a PI in many national and international research projects, funded by H2020, EPSRC, InnovateUK, DFG, industry partners, and others, as well as the director of the EPSRC Centre for Doctoral Training (CDT) in Agri-Food Robotics (AgriFoRwArdS). The STRANDS, ILIAD, RASberry, and NCNR projects are among the bigger projects he is or was involved with. In all his work, he researches autonomous robots, human-robot interaction, interaction-enabling technologies, and system architectures. Marc Hanheide specifically focuses on aspects of long-term robotic behaviour and human-robot interaction and adaptation. His work contributes to robotic applications in care, logistics, nuclear decommissioning, security, agriculture, museums, and general service robotics. He features regularly in public media, has published more than 100 peer-reviewed articles, and is actively engaged in promoting the public understanding of science through dedicated events, media appearances, and public lectures.

Plenary 02: Experimental Robotics: from Results to Policies

Experimental Validation of the SPRING-ARI robotic platform [Slides, Recording]

Cyril Liotard, ERM Automatismes

AI and Children’s Rights: Lessons Learnt from the Implementation of the UNICEF Policy Guidance to Social Robots for Children [Slides, Recording]

Dr. Vasiliki Charisi, University College London

Abstract: The rapid development of Artificial Intelligence (AI) places children in a constantly changing environment with new applications that utilize Generative AI, Social Robots and Virtual Reality impacting their online and offline lives. To ensure the development of responsible AI for children, UNICEF developed a Policy Guidance on AI and Children’s Rights, drawing on inputs from international experts, governments, industry and children and invited organizations and industry to pilot their implementation in different kinds of applications. In this talk, I will describe the process of piloting some of the proposed recommendations in a social robot for children. The talk will tackle the following three aspects: A. How can recommendations and guidelines for AI and Children’s Rights be embedded in industry strategies and translated into concrete technical specifications for AI products that protect children’s privacy, safety, cyber-security, inclusion and non-discrimination and their rights in the digital world? B. How can we ensure children’s inclusion in the whole cycle of product design, development, implementation, and evaluation? C. How can governments and intergovernmental institutions support a transparent interaction between policy, industry, and civil society and ensure accountability?

Bio: Vicky Charisi is a Research Scientist at the European Commission, Joint Research Centre, Unit of Digital Transformation, Digital Economy and Society and at the EU Policy Lab. She studies the impact of Artificial Intelligence on Human Behaviour, and her research supports policymaking at the European Commission and internationally. She is particularly interested in understanding how interactive and intelligent systems, including social robots, affect human cognitive development, such as the processes of structure emergence especially in early childhood and how social interactions affect development. At the same time, she works on ethical considerations in the design and development of social robots and she has ongoing collaborations with UNICEF, United Nations ITU, IEEE Standards Association and other organizations for the consideration of human rights in the design of robots for children. In addition, her work tries to understand cross-cultural differences in the perception of fairness and she conducts studies in Europe, Africa and Asia. Vicky finished her Ph.D. studies at the UCL Institute of Education and worked at the University of Twente in the Human-Media Interaction group. Vicky has published more than 60 scientific publications and science-for-policy reports, and she serves as a Chair of the IEEE Computational Intelligence Society, Cognitive and Developmental Systems, TF of Human-Robot Interaction.

Plenary 03: Ethics-Ready Robotics or Robot-Ready Ethics?

Ethics and Robot Acceptance in a Day-care Hospital [Slides, Recording]

Prof. Anne-Sophie Rigaud, Assistance Publique – Hôpitaux de Paris

Ethically Aligned Design for Social Robotics [Slides, Recording]

Prof. Raja Chatila, Sorbonne Université (@raja_chatila)

Opportunities and challenges in putting AI ethics in practice: the role of the EU [Slides, Recording]

Dr. Mihalis Kritikos, Ethics and Integrity Sector of the European Commission (DG-RTD)

Abstract: Social robots are designed to interact with humans. Humans are accustomed to interacting with other humans of whom they have some understanding and of whom they expect some behavior, and with whom they share some common ground and background knowledge. Humans also usually share common values, and have an understanding of the core values of other humans. Building robots that use human knowledge, have human shape and appearance, and interact in a human-like manner is usually justified by the objective of making the interaction more natural for humans. But at the same time this makes humans engage with a machine which actually doesn’t have any understanding of the mentioned features of human-human interactions. It could at most give an illusion thereof – which is in itself an ethical issue. Prior to any deployment of social robots with humans it is key to understand these factors and to design robots according to a methodology that enables some alignment with human values such as privacy, intimacy, autonomy, dignity, etc., which have various concrete expressions depending on context. This ethically aligned design approach must be applied prior to any technical design, throughout the design process itself, and during robot deployment, considering robot users as well as all impacted stakeholders, including society itself. Their values must be analyzed, their tensions identified, and design choices must then be evaluated and made on the basis of these analyses, then revised according to deployment evaluation.

Bio: Raja Chatila is Professor emeritus at Sorbonne Université. He is former Director of the Institute of Intelligent Systems and Robotics (ISIR) and of the Laboratory of Excellence “SMART” on human-machine interaction. He was director of LAAS-CNRS, Toulouse France, in 2007-2010. His research covers several aspects of Robotics in robot navigation and SLAM, motion planning and control, cognitive and control architectures, human-robot interaction, machine learning, and ethics. He works on robotics projects in the areas of service, field, aerial and space robotics. He is author of over 170 international publications on these topics. Current and recent projects: HumanE AI Net the network of excellence of AI centers in Europe, AI4EU promoting AI in Europe, AVETHICS on the ethics of automated vehicle decisions, Roboergosum on robot self-awareness and Spencer on human-robot interaction in populated environments. He was President of the IEEE Robotics and Automation Society for the term 2014-2015. He is co-chair of the Responsible AI Working group in the Global Partnership on AI (GPAI) and member of the French National Pilot Committee for Digital Ethics (CNPEN). He is chair of the IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems. He was member of the High Level Expert Group in AI with the European Commission (HLEG-AI). Honors: IEEE Fellow, IEEE Pioneer Award in Robotics and Automation, Honorary Doctor of Örebro University (Sweden).

Abstract: The talk will shed light on the importance of establishing a distinct Ethics organizational/conceptual approach for research projects funded in the area of digital technologies, to enable the development of a human-centric, trustworthy and robust digital research ecosystem. To this end, for Horizon Europe, a first set of specialized guidance notes has been produced followed by several other organizational modalities. Given the novelty of this set of technologies from a research ethics governance perspective, the talk will discuss the particular needs for guidance, education, training and development of expertise. Furthermore, the challenges that are associated with the upcoming adoption of the EU Artificial Intelligence Act and its possible impact upon the design and implementation of research projects will be discussed. Particular attention will also be given to other EC initiatives in this field and to the work of other international organisations.

Bio: Mihalis Kritikos is a Policy Analyst at the Ethics and Integrity Sector of the European Commission (DG-RTD), working on the ethical development of emerging technologies with a special emphasis on AI Ethics, and a Senior Associate Fellow at the Brussels School of Governance (Centre for Digitalisation, Democracy and Innovation). Before that, he worked at the Scientific Foresight Service of the European Parliament as a legal/ethics advisor on Science and Technology issues (STOA/EPRS), authoring more than 50 publications in the domain of new and emerging technologies and contributing to the drafting of more than 15 European Parliament reports/resolutions in the fields of artificial intelligence, robots, distributed ledger technologies and blockchains, precision farming, gene editing and disruptive innovation. He has worked as a Senior Associate in the EU Regulatory and Environment Affairs Department of White and Case, as a Lecturer at several UK Universities and as a Lecturer/Project Leader at the European Institute of Public Administration (EIPA).

Plenary 04: Audio-Visual Perception, the Robo-Centric Case

Robust Audio-Visual Perception of Humans [Slides, Recording]

Prof. Sharon Gannot, Bar-Ilan University

Audio-Visual Speech Source Separation and Speaker Tracking [Slides, Recording]

Prof. Wenwu Wang, University of Surrey (@wang_wenwu)

Abstract: In complex room settings, machine listening systems may experience a decline in performance due to factors like room reverberations, background noise, and unwanted sounds. Concurrently, machine vision systems can suffer from issues like visual occlusions, insufficient lighting, and background clutter. Combining audio and visual data has the potential to overcome these limitations and enhance machine perception in complex audio-visual environments. In this talk, we will showcase selected works related to audio-visual speech separation and speaker tracking. This encompasses the fusion of audio-visual data for speech source separation, employing techniques such as Gaussian mixture models, dictionary learning, and deep learning. In addition, we will explore the integration of audio-visual information for speaker tracking and localization, utilizing methods such as PHD filtering, particle flow, and deep learning. We will also provide insights into our ongoing advancements in this field, particularly in the context of ego-centric scenarios.

Bio: Wenwu Wang is a Professor in Signal Processing and Machine Learning, and a Co-Director of the Machine Audition Lab within the Centre for Vision Speech and Signal Processing, University of Surrey, UK. He is also an AI Fellow at the Surrey Institute for People Centred Artificial Intelligence. His current research interests include signal processing, machine learning and perception, artificial intelligence, machine audition (listening), and statistical anomaly detection. He has (co)-authored over 350 papers in these areas. He has been involved as Principal or Co-Investigator in more than 30 research projects, funded by UK and EU research councils, and industry (e.g. BBC, NPL, Samsung, Tencent, Huawei, Saab, Atlas, and Kaon). He is a (co-)recipient of over 15 awards including the IEEE Signal Processing Society 2022 Young Author Best Paper Award, ICAUS 2021 Best Paper Award, DCASE 2020 and 2023 Judge’s Award, DCASE 2019 and 2020 Reproducible System Award, and LVA/ICA 2018 Best Student Paper Award. He is the elected Chair of IEEE Signal Processing Society (SPS) Machine Learning for Signal Processing Technical Committee, the Vice Chair of the EURASIP Technical Area Committee on Acoustic Speech and Music Signal Processing, a Board Member of IEEE SPS Technical Directions Board, an elected Member of the IEEE SPS Signal Processing Theory and Methods Technical Committee, and an elected Member of the International Steering Committee of Latent Variable Analysis and Signal Separation. He is an Associate Editor (2020-2025) for IEEE/ACM Transactions on Audio Speech and Language Processing. He was a Senior Area Editor (2019-2023) and an Associate Editor (2014-2018) for IEEE Transactions on Signal Processing.

Plenary 05: Multi-Party Conversational Robots

Multi-User Spoken Conversations with Robots [Slides, Recording]

Dr. Daniel Hernandez Garcia, Heriot-Watt University

Predictive modelling of turn-taking in human-robot interaction [Slides, Recording]

Prof. Gabriel Skantze, KTH Stockholm (@GabrielSkantze)

Abstract: Conversational interfaces, in the form of voice assistants, smart speakers, and social robots are becoming ubiquitous. This development is partly fueled by the recent developments in large language models. While this progress is very exciting, human-machine conversation is currently limited in many ways. In this talk, I will specifically address the modelling of conversational turn-taking. As current systems lack the sophisticated coordination mechanisms found in human-human interaction, they are often plagued by interruptions or sluggish responses. I will present our recent work on predictive modelling of turn-taking, which allows the system to not only react to turn-taking cues, but also predict upcoming turn-taking events and produce relevant cues to facilitate real-time coordination of spoken interaction.

Bio: Gabriel Skantze is a Professor in Speech Communication and Technology, with a specialization in Conversational Systems, at the Department of Speech Music and Hearing at KTH in Stockholm. His research studies human communication and computational models that allow computers and robots to have face-to-face conversations with humans. This involves both verbal and non-verbal (gaze, prosody, etc) aspects of communication, and his research involves phenomena such as turn-taking, feedback, joint attention, and language acquisition. Since social robots are likely to play an important role in our future society, the technology has direct applications, but it can also be used to increase our understanding of the mechanisms behind human communication. This requires an interdisciplinary approach, which includes language technology, artificial intelligence, machine learning, phonetics, and linguistics. In 2014, he co-founded the company Furhat Robotics (together with Samer Al Moubayed and Jonas Beskow at KTH) where he is working part-time as a Chief Scientist in the company. Gabriel is the President of SIGdial, the ACL (Association for Computational Linguistics) Special Interest Group on Discourse and Dialogue, Associate Editor for the Human-Robot Interaction section of Frontiers in Robotics and AI, and Action Editor for the ACL Rolling Review. He is an alumni member of the Young Academy of Sweden – an independent, cross-disciplinary forum for some of the most promising young researchers in Sweden in all disciplines.

Plenary 06: Social Robot Behavior Policies

Learning Robot Behaviour [Slides, Recording]

Dr. Chris Reinke, Inria @ Univ. Grenoble Alpes, (@ScienceReinke)

Human-Interactive Mobile Robots: from Learning to Deployment [Slides, Recording]

Prof. Xuesu Xiao, George Mason University (@XuesuXiao)

Abstract: During the transition from highly restricted workspaces such as factories and warehouses into complex and unstructured populated environments, mobile robots encounter both challenges and opportunities: On one hand, human-robot interactions in the wild are diverse and uncertain, necessitating mobile robots to reason about and act upon unwritten social norms; On the other hand, the variety of humans in the wild also provides a wealth of knowledge that robots can harness to enhance their adaptivity. In this winter school course, we will discuss methodologies for developing human-interactive mobile robots that efficiently learn from and harmoniously deploy among humans, with a focus on trustworthy and explainable real-world navigation systems: (1) To learn from (non-expert) humans, Adaptive Planner Parameter Learning (APPL) leverages simple human interaction modalities and fine-tunes existing motion planners; (2) To deploy in human-populated social spaces, two large-scale datasets, Socially Compliant Navigation Dataset (SCAND) and Multimodal Social Human Navigation Dataset (MuSoHu), allow mobile robots to learn social navigation using, e.g., inverse optimal control and a hybrid classical and learning-based paradigm.

Bio: Xuesu Xiao is an Assistant Professor in the Department of Computer Science at George Mason University. Xuesu (Prof. XX) directs the RobotiXX lab, in which researchers (XX-Men) and robots (XX-Bots) work together at the intersection of motion planning and machine learning with a specific focus on developing highly capable and intelligent mobile robots that are robustly deployable in the real world with minimal human supervision. Xuesu received his Ph.D. in Computer Science from Texas A&M University in 2019, Master of Science in Mechanical Engineering from Carnegie Mellon University in 2015, and dual Bachelor of Engineering in Mechatronics Engineering from Tongji University and FH Aachen University of Applied Sciences in 2013. His research has been featured by Google AI Blog, IEEE Spectrum, US Army, Robotics Business Review, Tech Briefs, and WIRED. He serves as an Associate Editor for IEEE Robotics and Automation Letters, International Conference on Robotics and Automation, International Conference on Intelligent Robots and Systems, and International Symposium on Safety, Security, and Rescue Robotics.

Plenary 07: Sensing the Environment: from Detecting Falls to Robot Self-Localisation

Environment Mapping, Self-localisation, and Simulation [Slides, Recording]

Dr. Michal Polic, Czech Technical University in Prague

Human-presence modeling and social navigation of an assistive robot solution for detection of falls and elderly’s support [Slides, Recording]

Prof. Antonios Gasteratos, Democritus University of Thrace (@gasteratos)

Abstract: As the world’s population ages, there is an increasing demand for intelligent systems that can facilitate individuals’ independence while ensuring their safety. Assistive robotics has emerged as a viable tool in this context, providing personalized help and autonomy. We present the design and implementation of an assisted living robot tailored to the fall detection challenge. By leveraging robust SLAM, human-aware navigation and fall detection algorithms, we aim to present a safe, effective, real-time and comprehensive robotic strategy to detect and respond to fall events. A developed robotic platform is demonstrated and assessed in practical assisted living scenarios. Finally, contemporary research methodologies, potential ideas and challenges of socially aware robot navigation are discussed.

Bio: Antonios Gasteratos (FIET) is a Full Professor of Robotics, Mechatronics and Computer Vision at Democritus University of Thrace, Dean of the School of Engineering and Director of the Laboratory of Robotics and Automation. He holds an MEng and a PhD in Electrical and Computer Engineering from Democritus University of Thrace (1994 and 1999, respectively). During the past 20 years he has been principal investigator on several projects funded mostly by the European Commission, the European Space Agency, the Greek Secretariat for Research and Technology, industry and other sources, which were mostly related to robotics and vision. He has published over 300 papers in peer-reviewed journals and international conferences and written four textbooks in Greek. He is Subject Editor-in-Chief of Electronics Letters and Associate Editor of Expert Systems with Applications, the International Journal of Optomechatronics, and the International Journal of Advanced Robotic Systems. He is also an evaluator of projects supported by the European Commission and other funding agencies, as well as a reviewer for many international journals in the field of Computer Vision and Robotics. Antonios Gasteratos has been a member of programme committees of international conferences and chairman and co-chairman of international conferences and workshops.

Understanding Human Behavior for Mental Wellbeing Robotic Coaches

Multi-Modal Human Behaviour Understanding [Slides, Recording]

Dr. Lorenzo Vaquero Otal, University of Trento

Robotic Coaches for Mental Wellbeing: From the Lab to the Real World [Slides, Recording]

Prof. Hatice Gunes, University of Cambridge (@HatijeGyunesh)

Abstract: In recent years, the field of socially assistive robotics for promoting wellbeing has witnessed a notable surge in research activity. It is increasingly recognized within the realms of social robotics and human-robot interaction (HRI) that robots have the potential to function as valuable instruments for evaluating, sustaining, and enhancing various aspects of human wellbeing, including physical, mental, and emotional health. At the Cambridge Affective Intelligence and Robotics Lab (https://cambridge-afar.github.io/), our work on creating robotic coaches for mental wellbeing started in 2019 with a 5-year funding from the UK Engineering and Physical Sciences Research Council (EPSRC). Since then, we have engaged in a series of studies, employing an iterative approach that integrates user-centric design, testing, and deployment in both controlled laboratory settings and real-world contexts, while learning from failure and mistakes and striving to continuously improve our robotic coaches. We have done this by 1) collaborating with experienced human coaches and professionals who currently deliver these interventions, 2) gaining insights into the expectations and perceptions of potential users, and collecting valuable feedback from them, and 3) developing real-time AI and data-driven affective adaptation mechanisms for longitudinal deployment. In this talk, I will share our journey in developing robotic coaches for mental wellbeing and transitioning them from the controlled lab environment to real-world settings, and will illustrate the challenges and opportunities of social robotics for promoting wellbeing with a number of case studies, with insights for short- and long-term adaptation, and highlight the perceptions and expectations of prospective users to guide future research in this area.

Bio: Hatice Gunes is a Professor of Affective Intelligence and Robotics (AFAR) and the Director of the AFAR Lab at the University of Cambridge’s Department of Computer Science and Technology. Her expertise is in the areas of affective computing and social signal processing cross-fertilising research in multimodal interaction, computer vision, machine learning, social robotics and human-robot interaction. She has published over 165 papers in these areas (H-index=37, citations > 7,700), with most recent works on bias mitigation and fairness for affective computing, multiple appropriate facial reaction generation, graph representation for personality recognition, lifelong and continual learning for facial expression recognition and affective robotics, and longitudinal HRI for wellbeing. She has served as an Associate Editor for IEEE Transactions on Affective Computing, IEEE Transactions on Multimedia, and Image and Vision Computing Journal, and has guest edited many Special Issues, the latest ones being 2022-23 Int’l Journal of Social Robotics Special Issue on Embodied Agents for Wellbeing, 2021-22 Frontiers in Robotics and AI Special Issue on Lifelong Learning and Long-Term Human-Robot Interaction, and 2020-21 IEEE Transactions on Affective Computing Special Issue on Automated Perception of Human Affect from Longitudinal Behavioural Data. Other research highlights include Outstanding PC Award at ACM/IEEE HRI’23, RSJ/KROS Distinguished Interdisciplinary Research Award Finalist at IEEE RO-MAN’21, Distinguished PC Award at IJCAI’21, Best Paper Award Finalist at IEEE RO-MAN’20, Finalist for the 2018 Frontiers Spotlight Award, Outstanding Paper Award at IEEE FG’11, and Best Demo Award at IEEE ACII’09. Prof Gunes is the former President of the Association for the Advancement of Affective Computing (2017-2019), is/was the General Co-Chair of ACM ICMI’24 and ACII’19, and the Program Co-Chair of ACM/IEEE HRI’20 and IEEE FG’17. 
She was the Chair of the Steering Board of IEEE Transactions on Affective Computing (2017-2019) and was a member of the Human-Robot Interaction Steering Committee (2018-2021). Her research has been supported by various competitive grants, with funding from Google, the Engineering and Physical Sciences Research Council UK (EPSRC), Innovate UK, British Council, Alan Turing Institute and EU Horizon 2020. In 2019 she was awarded a prestigious EPSRC Fellowship to investigate adaptive robotic emotional intelligence for wellbeing (2019-2025) and has been named a Faculty Fellow of the Alan Turing Institute – UK’s national centre for data science and artificial intelligence (2019-2021). Prof Gunes is a Staff Fellow of Trinity Hall, a Senior Member of the IEEE, and a member of the AAAC.

Panel: Are social robots already out there? Immediate challenges in real-world deployment.

Panelists: Vicky Charisi, Raja Chatila, Mihalis Kritikos, Severin Lemaignan, Cyril Liotard, Anne-Sophie Rigaud

Abstract: Challenges remain numerous for the deployment of actual social robots in our everyday lives (at work, at home).

On the technical side, robotic platforms are subject to hardware and software constraints. On the hardware side, sensors and actuators are restricted in size, power and performance, since physical space and battery capacity are limited. On the software side, large models can be used only if lots of computing resources are permanently available, which is not always the case, since these resources need to be shared between the various computing modules. Finally, on the regulatory and legal side, the rise of AI use is fast and needs to be balanced with ethical views that address our society’s needs, but the construction of proper laws and norms, and their acknowledgement and understanding by stakeholders, is slow. In this session, the panellists will survey all aspects of the problems at hand and provide you with an overview of the challenges that you as future scientists will need to solve in order to take social robots out of the labs and into the world!

Posters

Poster #01: The Social Bench Tool to study Child-Robot Interaction (Francesca Cocchella, Italian Institute of Technology / University of Genoa)

Poster #02: Large Language Models in Social Robots: The Key to Open Unconstrained Human-Robot Conversations? (Maria Pinto, Ghent University)

Poster #03: Adaptive second language tutoring through generative AI and social robots (Eva Verhelst, Ghent University)

Poster #04: Design Space Model for Robots Supporting Trust of Children and Older Adults in Wellness Contexts (Chia-Hsin Wu, Tampere University)

Poster #05: Goes to the Heart: Speaking the User’s Native Language (Shaul Ashkenazi, University of Glasgow)

Poster #06: Co-designing Conversational Agents for the Elderly: A Comprehensive Review (Sidonie Salomé, Université Grenoble Alpes)

Poster #07: Improvement of real-world dialogue recognition and capabilities of social robots (Andrew Blair, University of Glasgow)

Poster #08: Towards a Definition of Awareness for Embodied AI (Giulio Antonio Abbo, Ghent University)

Poster #09: Sound Source Localization and Tracking in Complex Acoustic Scenes (Taous Iatariene, Université de Lorraine)

Poster #10: A probabilistic approach for learning and adapting shared control skills with the human in the loop (Gabriel Quere, German Aerospace Center (DLR) – U2IS, IP Paris)

Poster #11: Musical Robot for People with Dementia (Paul Raingeard de la Bletiere, TU Delft)

Poster #12: Univariate Radial Basis Function Layers: Brain-inspired Deep Neural Layers for Low-Dimensional Inputs (Daniel Jost, INRIA @ UGA)

Poster #13: Mixture of Dynamical Variational Autoencoders for Multi-Source Trajectory Modeling and Separation (Xiaoyu Lin, INRIA @ UGA)

Poster #14: Preference-Based Reinforcement Learning for Social Robotics (Anand Ballou, INRIA @ UGA)

Poster #15: Speech Modeling with a Hierarchical Transformer Dynamical VAE (Xiaoyu Lin, INRIA @ UGA)

Hands-on Sessions

On Friday there will be hands-on sessions on the following topics. Each participant can join 2 sessions (morning and afternoon).

- Robot navigation with Reinforcement Learning

- ROS4HRI: How to represent and reason about humans with ROS

- Building a conversational system with LLMs using prompt engineering

- Robot self-localisation based on camera images

- Speaker extraction from microphone recordings

For the sessions, please bring your own laptop. We will soon provide instructions on how to set up the software.

Organisers

Organising Committee: Xavier Alameda-Pineda (Inria), Matthieu Py (Inria), Chris Reinke (Inria)

Scientific Committee: Xavier Alameda-Pineda (Inria), Chris Reinke (Inria), Tomas Pajdla (CVUT), Elisa Ricci (UNITN), Sharon Gannot (BIU), Oliver Lemon (HWU), Cyril Liotard (ERM Automatismes Industriels), Séverin Lemaignan (PAL Robotics), Maribel Pino (AP-HP).

Outreach Committee: Alex Auternaud (Inria), Victor Sanchez (Inria).