What makes a conversation pleasant? How would you talk if your objective was to be as engaging and useful as possible with a person you’ve never met, without appearing annoying? Now with some context: you are a robot, and your role is to guide and help people in a public building. At a loss for words? Why not follow some insights from a set of preliminary studies in the domain of Conversational AI, also known as Spoken Dialogue Systems [1, 2].

Wait, isn’t Alexa already achieving this?

The answer is no, although research in Spoken Dialogue Systems, i.e. artificial algorithms (recently, AIs) that are able to create dialogue with humans or other agents (robots for example) has been flourishing since the late nineties [3]. A large part of the research has focused on task-oriented systems, where the emphasis is on completing a user’s goal such as flight booking for example. Such conversations are designed to be short and functional in order to support completion of the task, with little or no attempt to entertain or establish a relationship with the user. A smaller focus has been on chatbots, which are designed to promote extended, unstructured conversation more characteristic of human-human interaction, often with no particular task beyond entertaining and engaging the user.

Largely, however, conversational systems remain divided between those classed as task-oriented, and chatbots focused solely on entertainment [4]. This is unnatural, as human conversations usually interleave social content with task-oriented content [5]. Systems such as Amazon’s Alexa, Google’s Assistant, and Samsung’s Bixby provide access to both entertainment and task-based interaction, but these features have to be requested separately and are often limited to jokes that are completed with a single user-system interaction within a well-known environment (the home) and are targeted at a specific known user (the owner).

To create more engaging interactions in more complex situations, it would therefore seem natural that Spoken Dialogue Systems must support both task completion and entertaining social conversation, and allow the user to switch effortlessly between the two. Surprisingly, this hypothesis has not been extensively experimentally tested yet.

How to build such a system?

In this new study performed by the Interaction Lab research team at Heriot-Watt University, 32 users were asked to interact with a newly developed system based on an improvement of their Alexa prize finalist `Alana’ system (www.alanaai.com) [6], guiding and helping them in a building on a university campus. The speciality of this system is to combine state-of-the art open-domain social conversation with task-based assistance relevant to the context. This is a setup that can easily be transferred to other similar situations, such as communal spaces in public or professional buildings, for example elder-care facilities as in the case of the SPRING project.

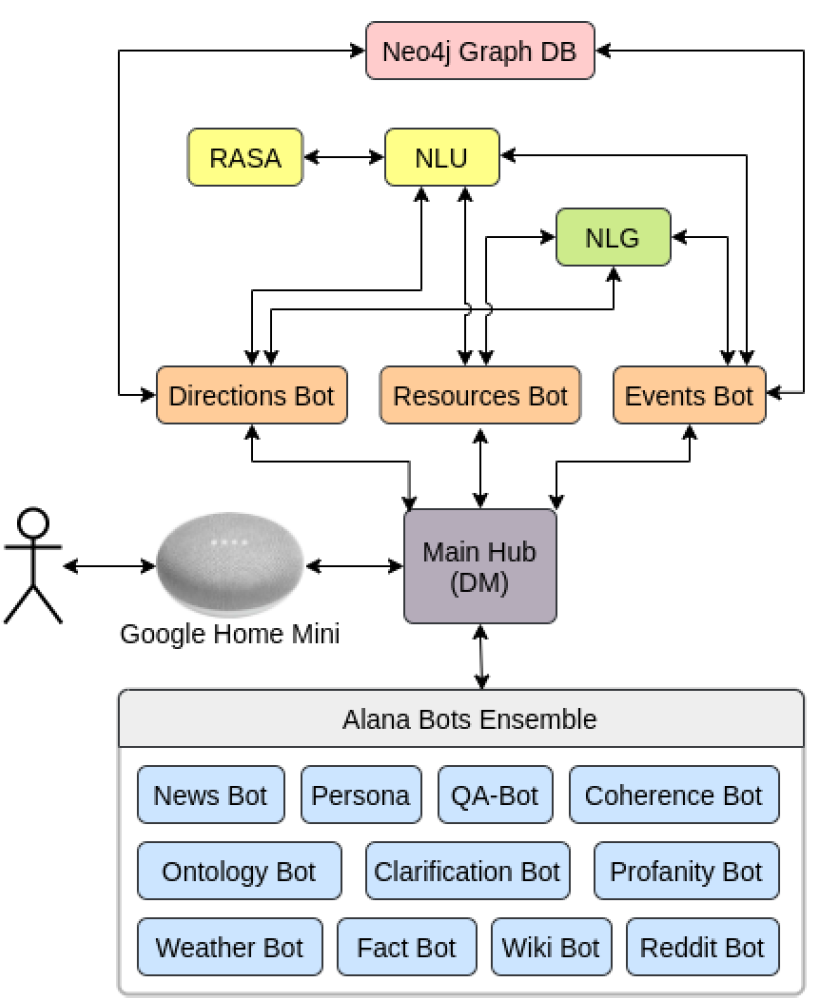

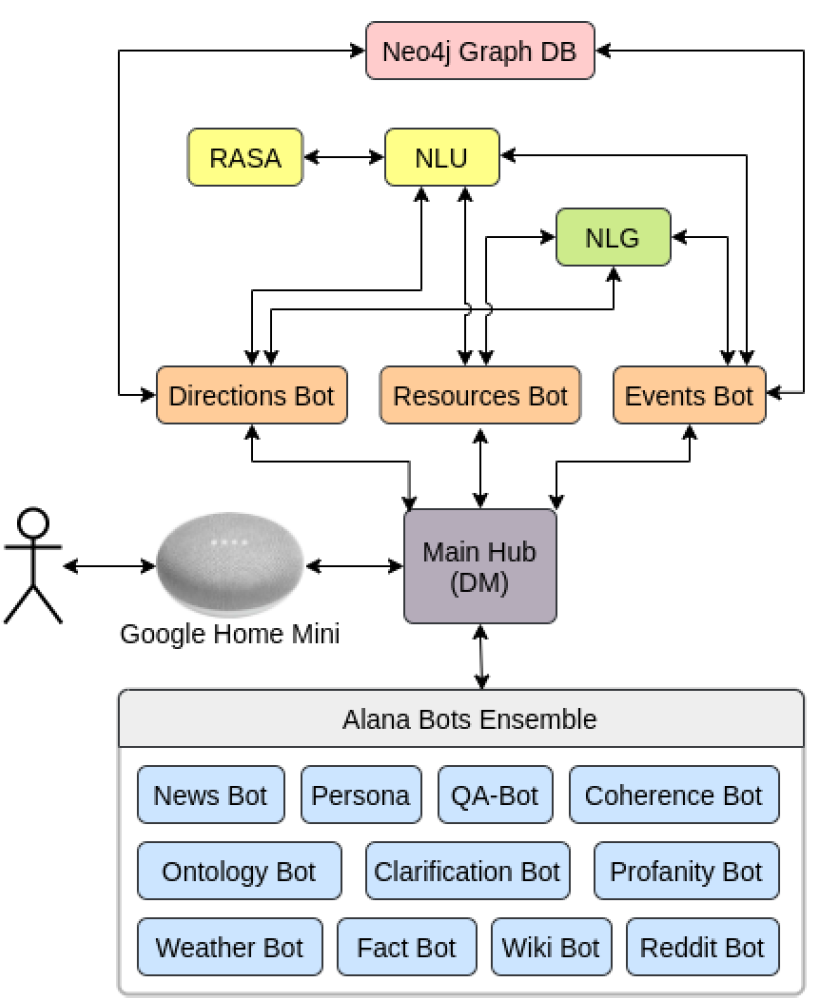

The figure below presents the architecture of the system. The Alana system is an ensemble of data-driven and rule-based conversational bots that compete in parallel to generate a contextually appropriate reply to the user’s utterance. Information Retrieval bots draw on a wide range of information sources to produce their potential replies including Wikipedia, Reddit, and a variety of news feeds on NewsAPI. Rule-based bots are used to respond in a controlled, consistent way to specific user queries e.g. in Persona expressing the views, likes, and dislikes of the virtual personality ‘Alana’. The Coherence Bot provides topic confirmation and conversational ‘drivers’ to progress the conversation when none of the other bots produce a potential response. Automatic Speech Recognition is performed here using Google Speech, which can be deployed on Android phones or Google Home smart speakers, for example. NLU stands for “Natural Language Understanding”. i.e. transforming user utterances into specific meaning representations. In this study, the Alana system’s open-domain NLU is augmented for building-specific Directions, Events, and Resources enquiries, based on the commercially available RASA framework. NLG stands for “Natural Language Generation”, the reverse step of NLU, when specific semantic meaning is encoded into words in a certain order for the user to generate clarifications, directions, and follow-up questions etc.

System Architecture

In addition to its smooth dialogue skills provided by its architecture, the system can guide users with information on the building itself (e.g. facilities, rooms, construction date, opening times), the location of rooms and directions to them, events happening in the building, and computers available for use. The Directions Bot handles requests for locations of rooms and directions to them, the Events Bot handles enquiries about events taking place in the building, while the Resources Bot provides information about how many computers are currently free in the building’s labs. All of these are generated through access to the Neo4j Graph Database, a graph representation of how the rooms are connected with each other.

In order to select which bot answers the user, a selection strategy is used at every interaction. If multiple bots produce a possible response, the response from the bot with the highest priority is selected, or else a trained model can be used [8] . In this system, the building-specific Directions, Events, Resources and Persona Bots are placed at the top of the list, in that order. The remaining bots are prioritised according to the following order:

- Profanity bot

- Fact+Joke bot

- Weather bot

- Persona

- Ontology bot

- Reddit bot

- News bot

- Wiki bot

How is the hypothesis tested?

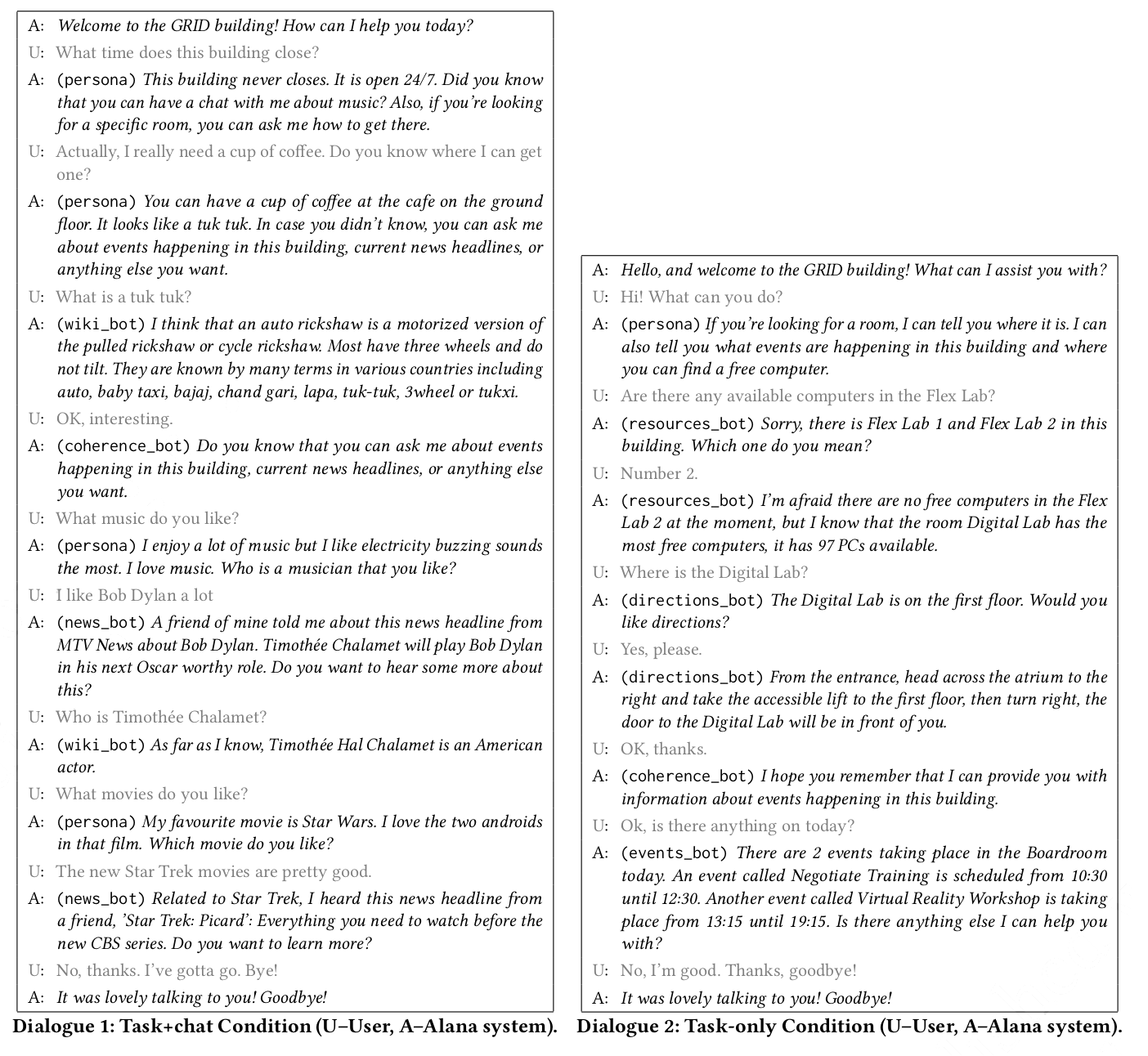

Each of the 32 participants interacted with two versions of the system: one that combines building-specific task-based dialogue with open-domain conversation (task+chat, described above) and a solely task-based system (task-only) deprived of any open-domain conversational ability. In both cases, they were given a set of goals (information to be obtained from the system). To get an idea of how different interactions can be with these two versions, examples of dialogues are given in Figure 2.

Two conversation systems

Following each interaction, participants completed a short Likert questionnaire [7], providing quantitative data on user attitudes. This consists of a set of proposal statements, associated with a five-point scale ranging from “strongly agree” through neutral to “strongly disagree”. At the end of the session they completed an exit questionnaire to determine their preference between versions and gather qualitative information on their experiences, together with demographic data. Sessions lasted a maximum of 30 minutes.

User reaction to different types of conversation

Efficiency

The mean number of conversational turns per interaction, where either the system or user takes a turn at speaking, was very similar in both versions; 7 in the task-only version and 8 in the task+chat version. Despite this, the mean duration per interaction was significantly longer in the task+chat version, indicating the turns themselves in the chat version may last longer. In the task+chat version the mean duration was 141 seconds compared to 94 seconds in the case of the task+only version (+47s, all figures rounded).

However, analysis showed that only twelve of the 32 participants (37.5%) engaged in social chat with the task+chat version. Moreover, just four people accounted for 63.5% of the chat responses. Re-running the above analyses excluding those who took part in social chat showed that the mean duration was still longer in the task+chat version, suggesting that rather than sociable ‘chatty’ responses from the users the increased duration may have been due to the longer system outputs in the task+chat condition, which consistently advertised the social as well as the other capabilities of the system.

Interestingly, across both versions there was a significant number of building-related enquiries that were additional to those supplied in the experiment procedure, and were initiated entirely by participants. A total of 25 participants in the task-only version and 21 in the task+chat version made enquiries of this type. As shown in the table below, participants were willing to go beyond the prescribed goals and engage with the system to ask questions of their own, but even in the task+chat version these were more often related to the building than to social topics.

| User utterance type | Percentage of responses, Task-only version | Percentage of responses, Task+chat version |

| Goal-specific | 36.6% | 36.6% |

| Off-goal, building related | 33.4% | 20.8% |

| Social chat | 0.7% | 14.3% |

Perception and Preference

Although survey results showed little difference between the perception the users had of the two different systems, the only statistically significant feature was that of future expected encounters: participants would be significantly happier to talk to the task-only system again in the future compared to the task+chat version.

When asked which version of the system they preferred, there was a tendency for participants to choose the task-only version (62.5%), but unfortunately insufficient statistical significance over the small sample size. Amongst participants who preferred the task-only system, the most frequent reason given was that it was more direct and/or gave them the information they wanted quicker. Eight participants in this group (40%) referred specifically to the chat features in a negative way, six mentioning the conversation’s building/public setting. On the other hand, amongst those who preferred the task+chat version, six out of ten (60%) explicitly referred to the additional chat features as their reason e.g. ‘more fun’, ‘more intelligent’. However it was still suggested by both preference groups that the task+chat version should advertise its ability to talk about other topics less often.

Alana Bot demo

So what’s the answer?

As often in research, things are not black or white. It appears that creating an engaging, pleasant yet sustainable dialogue is complex, and highly context- and user-dependent. Contrary to the expected positive effect, the inclusion of open domain chat in the dialogue in this specific task-based context seemed to have at best a neutral effect on user perceptions. In terms of dialogue quality, both approaches resulted in equally high levels of task completion, but the offer of social chat did however affect the conversational efficiency, significantly increasing the duration of interactions with the system. Of key interest is the relatively low take-up of the option to engage in social chat. The majority of participants did not engage in social conversation with the system. In contrast, a large proportion asked additional off-task questions relating to the building. This is interesting, as it indicates a willingness to engage freely with the system, but in a way that appears very much influenced by the environment and context.

This highlights the importance of evaluating dialogue systems in context and has important design implications for current work on conversational systems for healthcare support in hospital settings. The SPRING project aims to develop a conversational robot which is intended to provide and facilitate social interaction amongst elderly users; a user group where loneliness and lack of such interaction is increasingly recognised as a concern. The ability to successfully initiate and sustain conversation is key to this aim, and based on the results of this research requires careful handling. In particular, the dialogue management decision problem of when and how much it is good to chat needs to be further investigated.

Source & details:

- [1] Conversational Agents for Intelligent Buildings; Weronika Sieinska, Nancie Gunson, Christopher Walsh, Christian Dondrup, and Oliver Lemon, Proceedings of SIGDIAL 2020 [demo: https://www.youtube.com/watch?v=-gvgA8_B3Xw ]

- [2] It’s Good to Chat? Evaluation and Design Guidelines for Combining Open-Domain Social Conversation with Task-Based Dialogue in Intelligent Buildings, IVA 2020 – Proceedings of the 20th ACM International Conference on Intelligent Virtual Agents.

Further references:

- [3] A. L. Gorin, G. Riccardi, and J. H. Wright. 1997. How May I Help You? Speech Commun. 23, 1–2 (Oct. 1997), 113–127

- [4] Hongshen Chen, Xiaorui Liu, Dawei Yin, and Jiliang Tang. 2017. A Survey on Dialogue Systems: Recent Advances and New Frontiers. SIGKDD Explorations 19 (2017), 25–35.

- [5] Emmanuel A. Schegloff. 1968. Sequencing in Conversational Openings. American Anthropologist 70, 6 (1968), 1075–1095.

- [6] Amanda Cercas Curry, Ioannis Papaioannou, Alessandro Suglia, Shubham Agarwal, Igor Shalyminov, Xinnuo Xu, Ondřej Dušek, Arash Eshghi, Ioannis Konstas, Verena Rieser, et al. 2018. Alana v2: Entertaining and informative open-domain social dialogue using ontologies and entity linking. Alexa Prize Proceedings (2018).

- [7] Rensis Likert. 1932. A technique for the measurement of attitudes. Archives of psychology 140 (1932).

- [8] Igor Shalyminov, Ondrej Dusek, and Oliver Lemon “Neural Response Ranking for Social Conversation: A Data-Efficient Approach”, Search-Oriented Conversational AI: EMNLP-SCAI, 2018